Strategies for getting feedback on your documentation

Most documentation feedback arrives too late, after users have already struggled.

You publish documentation. Users struggle with it, give up, or contact support. Eventually someone tells you the docs were confusing, but by then dozens of users have already hit the same problem.

Here are strategies for catching documentation issues earlier, ordered by impact.

Pre-publication review

Get feedback before documentation goes live. Aim for up to three reviewers with different perspectives: a subject matter expert (catches technical errors), a technical writer (checks clarity and style), and someone without context (tests whether the instructions actually work).

You don’t need all three. Even one reviewer helps. The key: tell them what to focus on. “Check technical accuracy” is clearer than “review this.”

Not everything needs the same level of review. A new tutorial deserves a thorough look. A typo fix in a reference page? Quick scan is fine.

This breaks down when deadlines are tight or reviewers are unavailable. But preventing issues is usually cheaper than fixing them after users complain. Schedule review time upfront, because teams often leave it out of their estimates.

Monitor communication channels

Watch where users discuss your documentation. Support tickets, forums, GitHub issues, social media, internal Slack. Look for patterns:

“The docs don’t explain X.” “I couldn’t figure out how to Y.” “Spent 2 hours on Z before...”

When you spot problems, create documentation issues from them. Track them and fix them.

This should be your baseline. Users talk about your documentation whether you listen or not. The feedback is unfiltered. They’re not trying to be nice. They’re venting or asking for help, and that is what makes it valuable.
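If your channels can be exported as text, a small script can do a first pass at pattern spotting. What follows is a minimal sketch, not a finished tool: the phrase list, the `(channel, text)` message format, and the function names are all assumptions made for illustration. You would tune the patterns to how your own users actually complain.

```python
import re
from collections import Counter

# Hypothetical phrases that tend to signal a documentation gap.
# They mirror the example complaints above; adjust them for your own users.
DOC_PAIN_PATTERNS = [
    re.compile(r"\bdocs? (?:don['’]?t|do not|doesn['’]?t|does not) (?:explain|mention|cover)\b", re.I),
    re.compile(r"\bcouldn['’]?t figure out\b", re.I),
    re.compile(r"\bspent \d+ (?:hours?|days?) on\b", re.I),
]


def find_doc_complaints(messages):
    """Return the (channel, text) pairs whose text matches any pain pattern."""
    return [
        (channel, text)
        for channel, text in messages
        if any(pattern.search(text) for pattern in DOC_PAIN_PATTERNS)
    ]


def complaints_per_channel(messages):
    """Count matching messages per channel to see where complaints cluster."""
    return Counter(channel for channel, _ in find_doc_complaints(messages))


# Made-up sample messages, assumed to be exported from each channel.
SAMPLE_MESSAGES = [
    ("support", "The docs don't explain webhook retries."),
    ("forum", "I couldn't figure out how to rotate API keys."),
    ("github", "Spent 2 hours on pagination before finding the answer in an old issue."),
    ("slack", "Great release, thanks!"),
    ("support", "Couldn't figure out the auth flow."),
]

if __name__ == "__main__":
    for channel, text in find_doc_complaints(SAMPLE_MESSAGES):
        print(f"[{channel}] {text}")
```

Keyword matching like this will miss plenty and flag some noise, so treat the matches as candidates for a human to read, not as a replacement for reading the channels.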

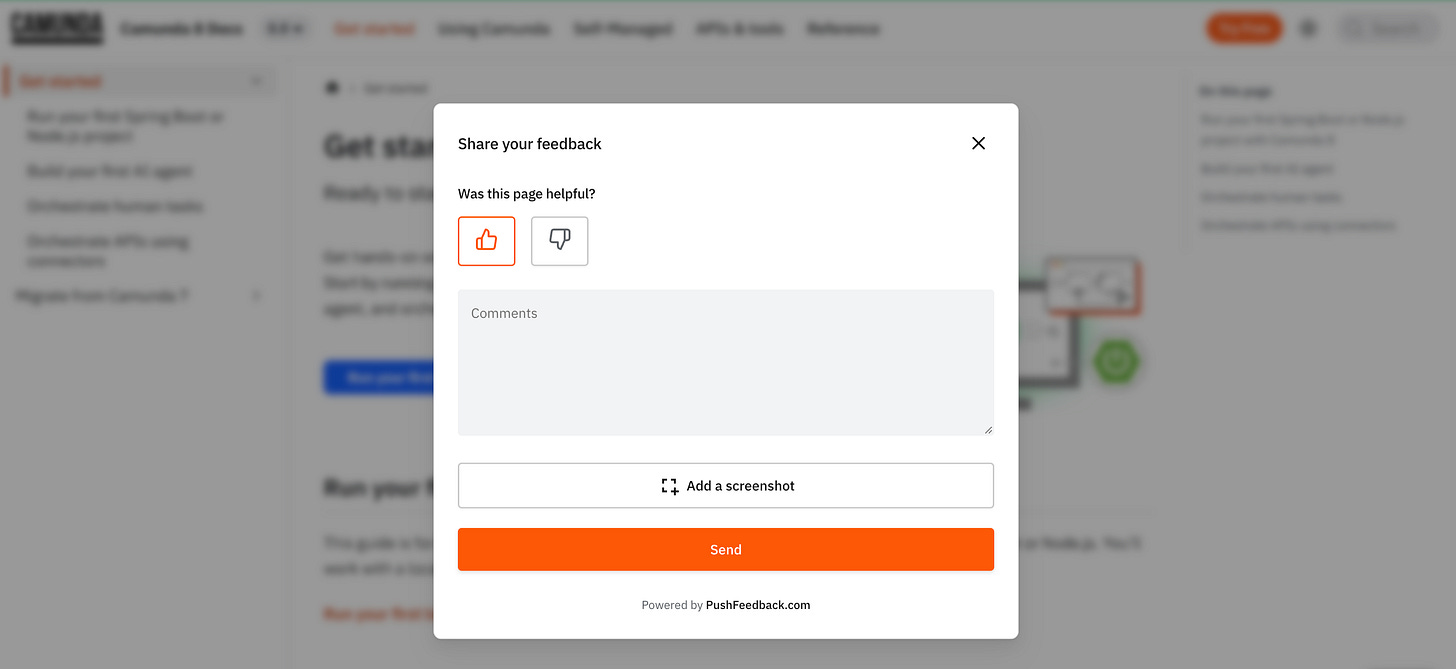

On-page feedback widget

Put a feedback mechanism directly on the documentation page. Users click a button, highlight a section, and say what’s wrong. Keep it anonymous, with no account needed, and route the feedback to your issue tracker.

Tools like PushFeedback let users annotate specific sections visually. They see the issue, highlight it, leave a comment. You get a screenshot with context.

If you have an AI chatbot on your docs, review what users ask it. Questions the chatbot couldn’t answer point to missing documentation. Questions asked repeatedly point to unclear documentation. If 50 users ask about webhook security in a month, that’s a signal to write that guide.
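Reviewing chatbot questions can start as a simple tally. The sketch below assumes you can export a month of chatbot logs as `(topic, answered)` pairs. That format, the function name, and the idea that questions are already grouped by topic are all assumptions here, since real logs usually need some manual or automated grouping first. The default threshold of 50 comes from the webhook security example above.

```python
from collections import Counter


def summarize_chatbot_log(entries, repeat_threshold=50):
    """Summarize one month of chatbot questions.

    entries: iterable of (topic, answered) pairs, where answered is False
    when the chatbot could not answer the question. This log format is an
    assumption for illustration, not any specific chatbot's export.
    """
    asked = Counter()
    unanswered = Counter()
    for topic, answered in entries:
        asked[topic] += 1
        if not answered:
            unanswered[topic] += 1

    return {
        # Topics the chatbot failed on, most failures first: likely missing docs.
        "unanswered_topics": sorted(unanswered, key=lambda t: (-unanswered[t], t)),
        # Topics asked at least repeat_threshold times: worth a closer look.
        "frequent_topics": sorted(t for t, n in asked.items() if n >= repeat_threshold),
    }


if __name__ == "__main__":
    # Made-up sample log.
    log = (
        [("webhook security", False)] * 50
        + [("pagination", True)] * 3
        + [("sso setup", False)]
    )
    print(summarize_chatbot_log(log))
```

The two lists overlap on purpose: a topic that is both frequent and unanswered is probably the first guide to write.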

The alternative is linking to GitHub issues. Works for developer-heavy audiences, costs nothing. But most users won’t leave the page, create an account, and give public feedback.

User testing sessions

Watch someone use your documentation in real-time. Don’t explain anything. Don’t help them. Just watch where they pause, re-read, or give up.

This works well for complex workflows, multi-step tutorials, and onboarding documentation. Simple reference docs have nothing to “test.”

We helped Finboot run an “Escape Room” using their docs. The company split into teams and tried to solve a puzzle by following documentation: sending blockchain transactions in the correct sequence. Everyone experienced the pain points firsthand. Inconsistencies, ambiguous instructions, missing steps. The whole company became motivated to fix it.

Intensive to set up, but it makes abstract documentation problems concrete.

User interviews

Schedule time with users who recently used your docs. Ask what worked and where they got stuck.

Interviews reveal things other methods miss. The specific sentence that confused them. The assumption they made that broke everything. But they don’t scale: external users are hard to schedule in large numbers, and anonymous user bases can’t be reached at all.

Best for internal documentation and B2B products where you know your users.

Surveys

Ask broad questions. “How helpful is our documentation?” “What topics are missing?”

Surveys give you trends and general sentiment. They don’t give you actionable issues. Users don’t remember which specific guide confused them two weeks ago.

Good for measuring documentation health over time. Not for finding specific problems.
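Tracking health over time can be as simple as averaging one rating question per survey round. This is a minimal sketch that assumes each response is a `(period, score)` pair, such as a 1 to 5 answer to “How helpful is our documentation?” collected each quarter. The data format and function name are illustrative, not taken from any survey tool.

```python
from collections import defaultdict
from statistics import mean


def average_score_by_period(responses):
    """Return the mean score per period, rounded to two decimals.

    responses: iterable of (period, score) pairs, e.g. ("2024-Q1", 4).
    Periods are sorted as strings, so use labels that sort chronologically.
    """
    by_period = defaultdict(list)
    for period, score in responses:
        by_period[period].append(score)
    return {
        period: round(mean(scores), 2)
        for period, scores in sorted(by_period.items())
    }


if __name__ == "__main__":
    # Made-up sample responses.
    sample = [
        ("2024-Q1", 3), ("2024-Q1", 4),
        ("2024-Q2", 4), ("2024-Q2", 5), ("2024-Q2", 5),
    ]
    print(average_score_by_period(sample))
```

A rising or falling average tells you whether the docs are trending in the right direction. It still won’t tell you which page to fix.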

Where to start

Most teams start with pre-publication review and monitoring. Those two cover the most ground with the least effort.

Then add other strategies based on your constraints: budget, team size, user base, and how much traffic your docs get.

Your documentation improves when you stop waiting for complaints and start actively seeking problems.